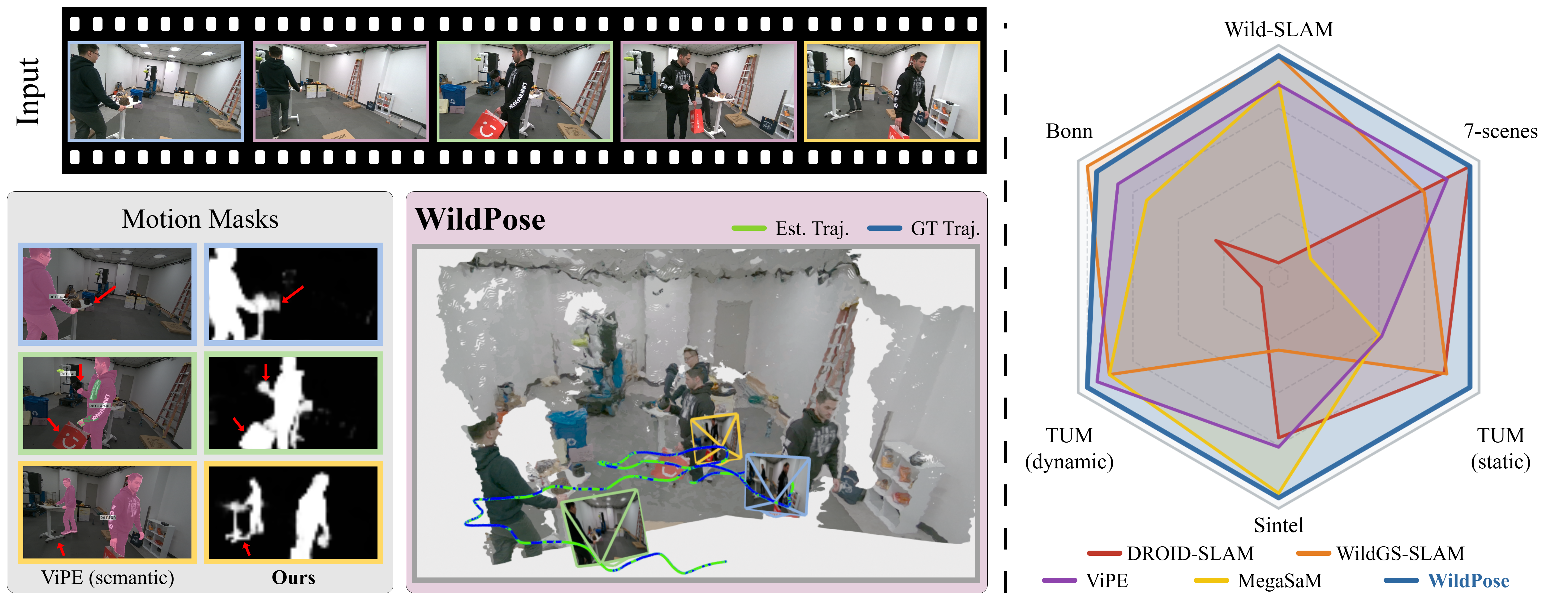

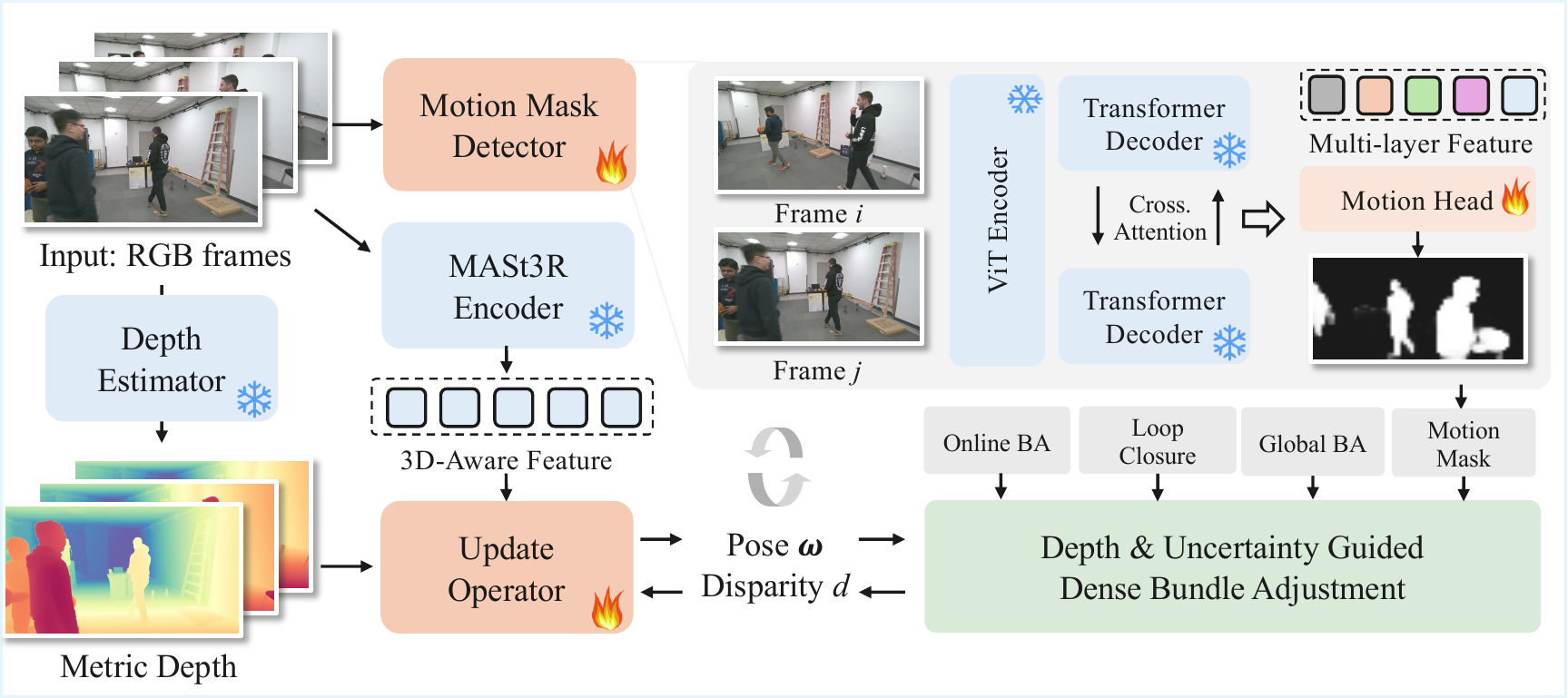

Estimating camera pose in dynamic environments is a critical challenge, as most visual SLAM and structure-from-motion (SfM) methods assume static scenes. Recent dynamic-aware methods exist, but each tends to cover only part of the problem: semantic-based approaches are brittle, per-sequence optimization methods fail on short sequences, and other learned models may degrade on static-only scenes. We present WildPose, a unified monocular pose estimation framework that is robust in dynamic environments while maintaining state-of-the-art performance on static and low-ego-motion datasets. Our key insight is to connect two powerful paradigms in modern 3D vision: the rich perceptual frontend of feedforward models and the end-to-end optimization of differentiable bundle adjustment (BA). We achieve this with a 3D-aware update operator built on a frozen, pre-trained MASt3R feature backbone, together with a high-capacity motion mask detector that uses multi-level 3D-aware features from the same backbone. Extensive experiments show that WildPose consistently outperforms prior methods across dynamic (Wild-SLAM, Bonn), static (TUM, 7-Scenes), and low-ego-motion (Sintel) benchmarks.

WildPose takes a calibrated monocular RGB sequence as input and estimates camera poses through a robust differentiable dense bundle adjustment pipeline. Our method leverages 3D-aware features from a frozen MASt3R encoder, which are adapted by a lightweight update operator to predict the flow and confidence cues used for pose and disparity refinement. In parallel, a dedicated motion mask detector reuses the multi-layer MASt3R features from each image pair to identify dynamic regions. These edge-dependent masks down-weight moving distractors directly inside bundle adjustment, avoiding brittle semantic assumptions while preserving valid constraints from objects that are static for a given pair. We further initialize and regularize the optimization with monocular metric depth priors. During inference, WildPose runs online over a sliding keyframe graph with local BA, then applies loop closure and global BA to improve long-term consistency. This design combines feedforward 3D priors with geometric optimization, enabling robust pose estimation in both dynamic and static environments.
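To make the role of the motion masks concrete, here is a minimal toy sketch of down-weighting dynamic correspondences inside a least-squares solve. It is not the WildPose implementation. A single 2D translation stands in for the camera pose and disparity variables, and the rule `weight = confidence * (1 - motion_prob)` is an assumption made for illustration. The function names `combine_weights` and `weighted_translation` are hypothetical.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def combine_weights(confidence: Sequence[float],
                    motion_prob: Sequence[float]) -> List[float]:
    """Per-correspondence weight for one image pair (one graph edge).

    Assumption for illustration: the flow confidence is scaled by the
    probability that the point is static on this particular edge.
    """
    return [c * (1.0 - m) for c, m in zip(confidence, motion_prob)]


def weighted_translation(src: Sequence[Point],
                         dst: Sequence[Point],
                         weights: Sequence[float]) -> Point:
    """Closed-form minimizer of sum_i w_i * ||src_i + t - dst_i||^2 over t."""
    total = sum(weights)
    if total <= 0.0:
        raise ValueError("all correspondences were masked out")
    tx = sum(w * (d[0] - s[0]) for s, d, w in zip(src, dst, weights)) / total
    ty = sum(w * (d[1] - s[1]) for s, d, w in zip(src, dst, weights)) / total
    return tx, ty


# Four static points shifted by the camera-induced motion (1, 0),
# plus two points on a moving object shifted by (5, 5).
src = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0), (2.0, 2.0), (3.0, 2.0)]
dst = [(1.0, 0.0), (2.0, 0.0), (1.0, 1.0), (2.0, 1.0), (7.0, 7.0), (8.0, 7.0)]
confidence = [1.0] * 6
motion_prob = [0.0, 0.0, 0.0, 0.0, 1.0, 1.0]  # hypothetical mask output

masked = weighted_translation(src, dst, combine_weights(confidence, motion_prob))
unmasked = weighted_translation(src, dst, confidence)
```

With the mask applied, the estimate recovers the static shift of (1, 0). Without it, the two moving points pull the estimate toward the object's motion. Because the weights are computed per image pair, a point that moves on one edge can still contribute on another edge where it is static, which matches the edge-dependent behavior described above.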

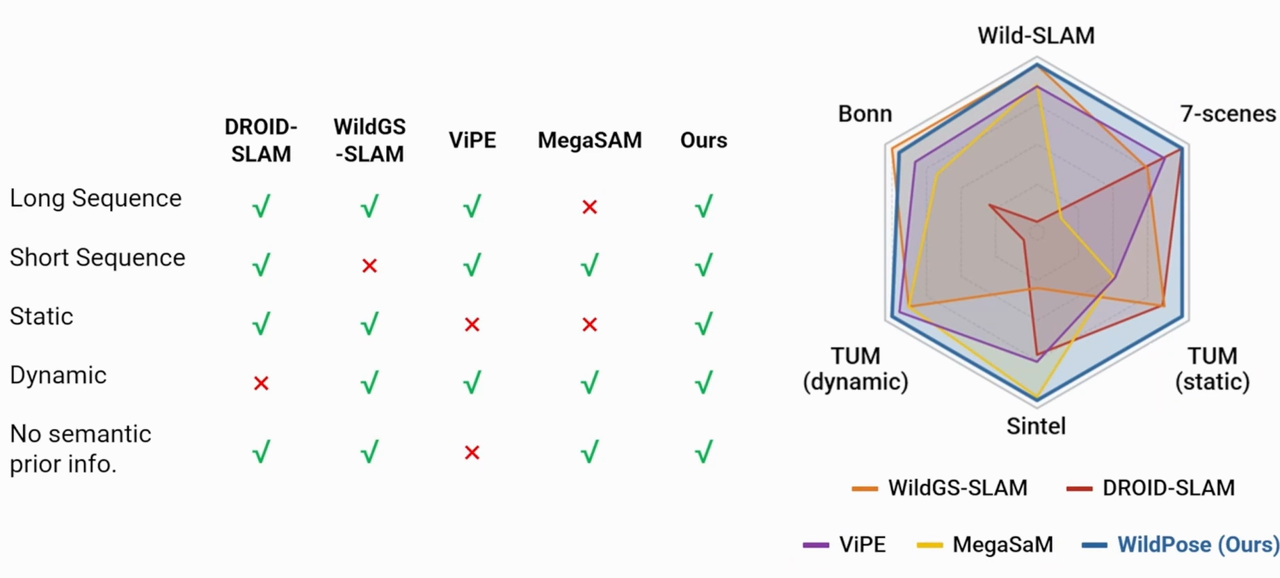

WildPose is designed to stay reliable across long and short sequences, static and dynamic scenes, and settings without semantic priors, while maintaining strong pose-estimation accuracy across benchmarks.

@inproceedings{Zheng2026WildPose,
  author = {Zheng, Jianhao and Zhu, Liyuan and Zhu, Zihan and Armeni, Iro},
  title = {WildPose: A Unified Framework for Robust Pose Estimation in the Wild},
  booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
  year = {2026}
}